Building intelligent systems that ship securely.

AI systems that prove they work. Cloud platforms hardened from day one. DevSecOps pipelines that never skip a scan.

Systems in Production

Built, validated, and running

Technology professional building at the intersection of AI, security, and infrastructure.

I build systems that work autonomously and reduce workload by design. Every system I ship follows true DevSecOps procedures: continuously validated, scanned, and hardened. Even rapid prototypes get full security scanning before they deploy.

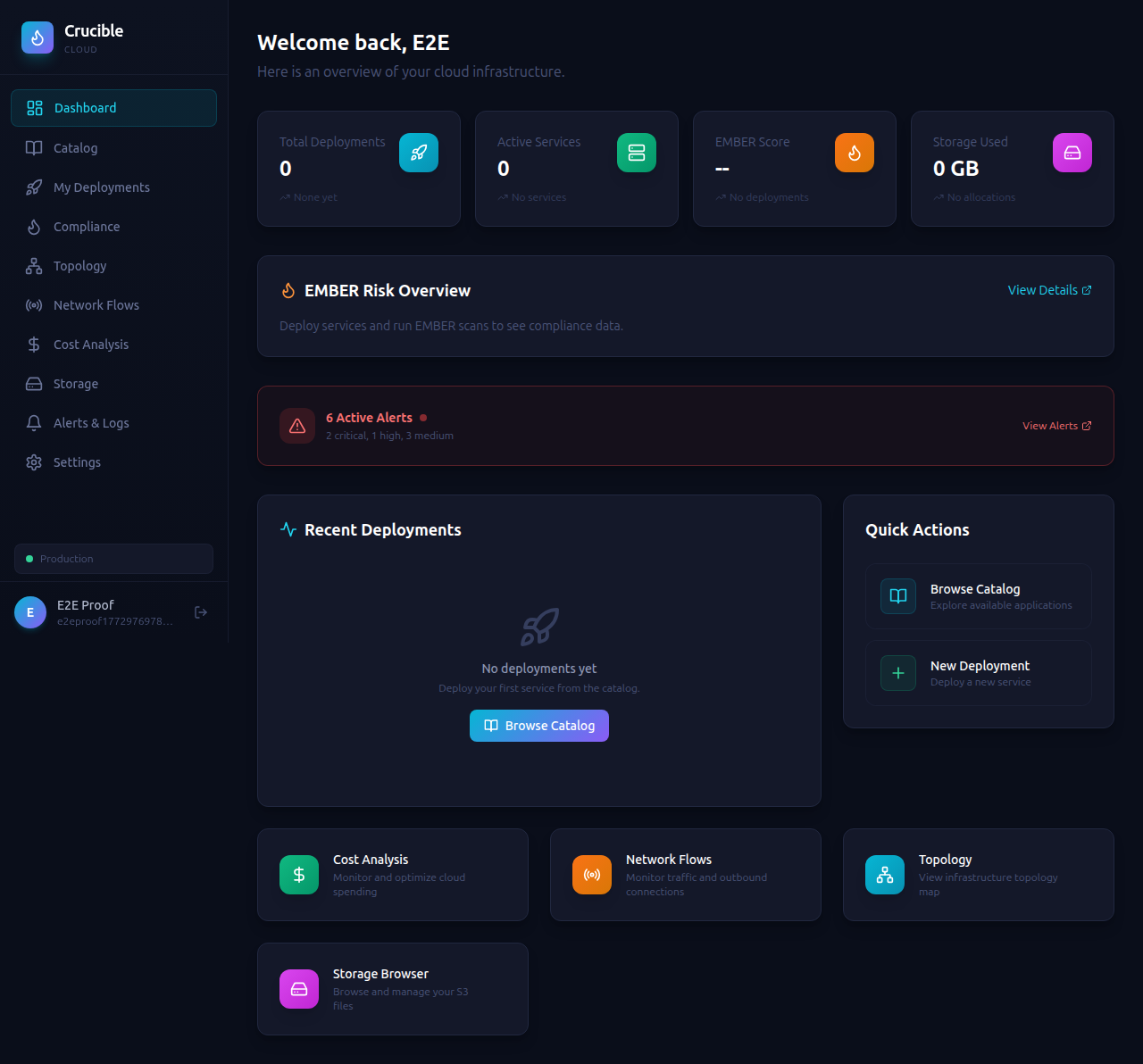

Currently leading development on EMBER, an autonomous AI companion that runs 24/7 on edge devices and mathematically proves its own improvement, and Crucible Cloud, a platform for deploying and security-auditing any GitHub repository in isolated sandboxes.

I believe the best software is secure by default, observable in production, and built to run without hand-holding.

End-to-end systems engineering, from architecture through production hardening.

Multi-agent orchestration with self-improvement pipelines. Systems that detect their own gaps, generate new capabilities, and mathematically prove they got better. Not by asking an LLM to grade itself, but through Wilson score intervals and hard statistical thresholds.
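As a rough illustration of what "proving" an improvement with a Wilson score interval can look like, here is a minimal sketch. The function names, the 95% confidence level (`z = 1.96`), and the rule that a candidate's lower bound must clear the baseline's upper bound are all assumptions for this example, not the production logic.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> Tuple[float, float]:
    """Return the (lower, upper) Wilson score interval for a success rate."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    if not 0 <= successes <= trials:
        raise ValueError("successes must be between 0 and trials")
    p_hat = successes / trials
    denom = 1 + z * z / trials
    center = (p_hat + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(
        p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials)
    ) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


def is_proven_better(base_successes: int, base_trials: int,
                     cand_successes: int, cand_trials: int,
                     z: float = 1.96) -> bool:
    """Hypothetical acceptance rule: the candidate's lower bound must
    exceed the baseline's upper bound, so the intervals do not overlap."""
    _, base_upper = wilson_interval(base_successes, base_trials, z)
    cand_lower, _ = wilson_interval(cand_successes, cand_trials, z)
    return cand_lower > base_upper


if __name__ == "__main__":
    print(wilson_interval(50, 100))
    print(is_proven_better(50, 100, 90, 100))
```

Unlike a raw percentage, the interval widens when there are few trials, so a capability that succeeded 3 out of 3 times does not automatically beat one that succeeded 90 out of 100 times.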

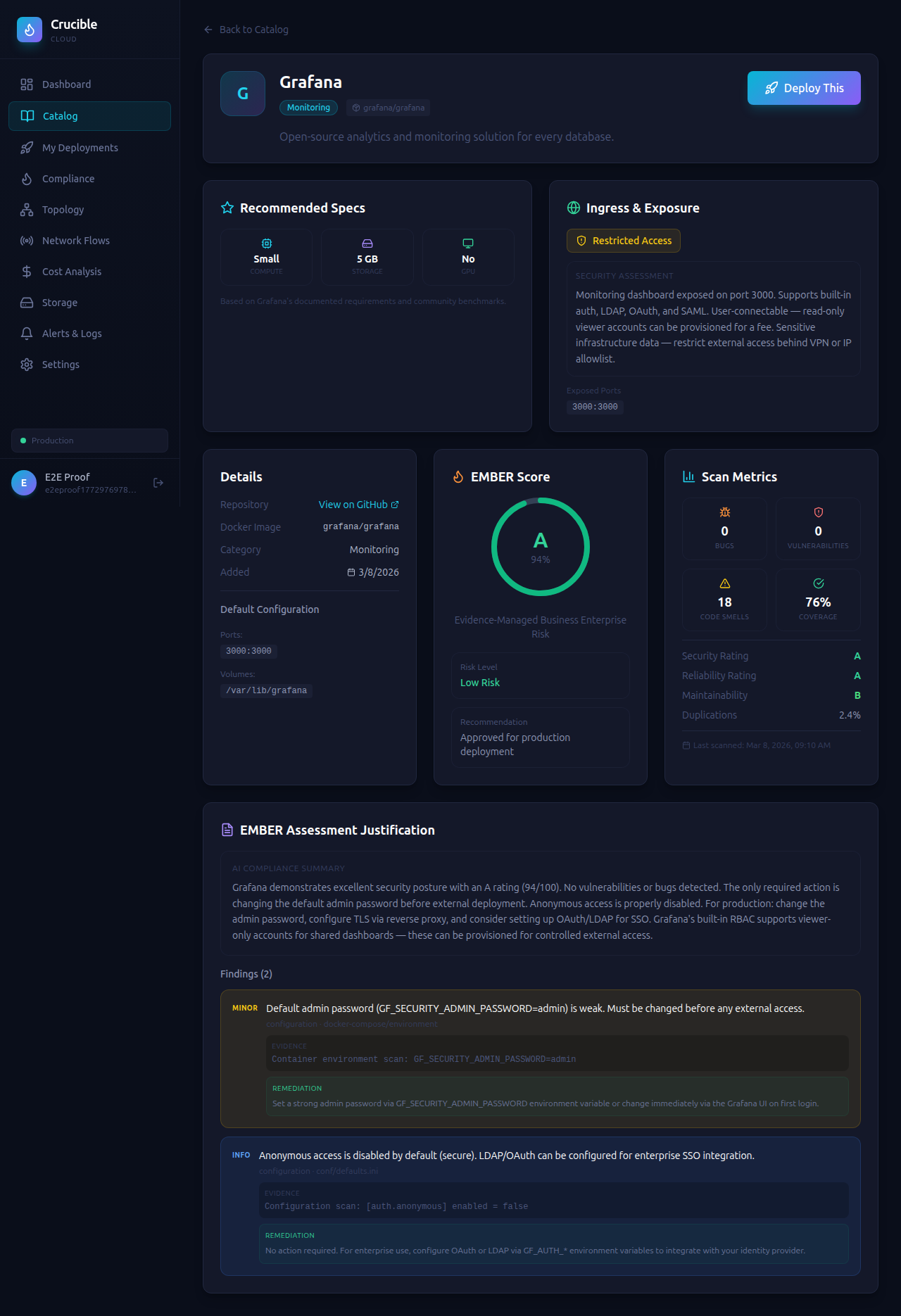

Security scanning built into every step. SonarQube SAST, Trivy container scanning, CrowdSec threat intelligence, fail2ban intrusion prevention. No code ships without passing the scan. No exceptions.

Isolated sandbox environments for deploying and evaluating any codebase. Automated compliance scoring, dependency auditing, and production readiness assessment. Built for teams that need to move fast without cutting security corners.

Production systems running on Raspberry Pi clusters with systemd hardening, watchdog timers, blue/green deployments, and out-of-process self-healing. Real infrastructure at the edge, not lab demos.

Model Context Protocol servers that give AI agents live access to databases, knowledge bases, and APIs. Secure by design with scoped tokens, secret scrubbing, table blocklists, and full audit logging on every tool call.
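A simplified sketch of how secret scrubbing, a table blocklist, and per-call audit logging might fit together around a tool call. Everything here is hypothetical: the table names, the regex patterns, and the `guarded_query` helper are illustrative assumptions, and a real server would use a proper SQL parser and token scoping rather than regular expressions.

```python
import re
from typing import Callable, Dict, List, Set

# Hypothetical pattern: key/value pairs whose key looks like a credential.
SECRET_PATTERN = re.compile(
    r"\b(api[_-]?key|token|password|secret)\b(\s*[:=]\s*)(\S+)", re.IGNORECASE
)
# Hypothetical blocklist of tables an agent may never read.
BLOCKED_TABLES: Set[str] = {"api_tokens", "user_credentials"}
# Naive table extraction; an assumption made to keep the sketch short.
TABLE_REF = re.compile(r"\b(?:from|join|into|update)\s+([A-Za-z_][\w.]*)", re.IGNORECASE)

AUDIT_LOG: List[Dict[str, str]] = []


def scrub_secrets(text: str) -> str:
    """Replace credential-looking values with a fixed marker."""
    return SECRET_PATTERN.sub(lambda m: m.group(1) + m.group(2) + "[REDACTED]", text)


def is_query_allowed(sql: str) -> bool:
    """Reject any query that references a blocklisted table."""
    tables = {m.group(1).lower() for m in TABLE_REF.finditer(sql)}
    return tables.isdisjoint(BLOCKED_TABLES)


def guarded_query(sql: str, run: Callable[[str], str]) -> str:
    """Log every call, block disallowed tables, and scrub the result."""
    allowed = is_query_allowed(sql)
    AUDIT_LOG.append({"sql": scrub_secrets(sql),
                      "decision": "allowed" if allowed else "blocked"})
    if not allowed:
        return "error: query touches a blocked table"
    return scrub_secrets(run(sql))


if __name__ == "__main__":
    print(guarded_query("SELECT note FROM notes", lambda _sql: "token=abc123"))
    print(guarded_query("SELECT * FROM api_tokens", lambda _sql: "unreachable"))
```

The audit entry is written before the allow/block decision is acted on, so blocked attempts are recorded as well as successful calls.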

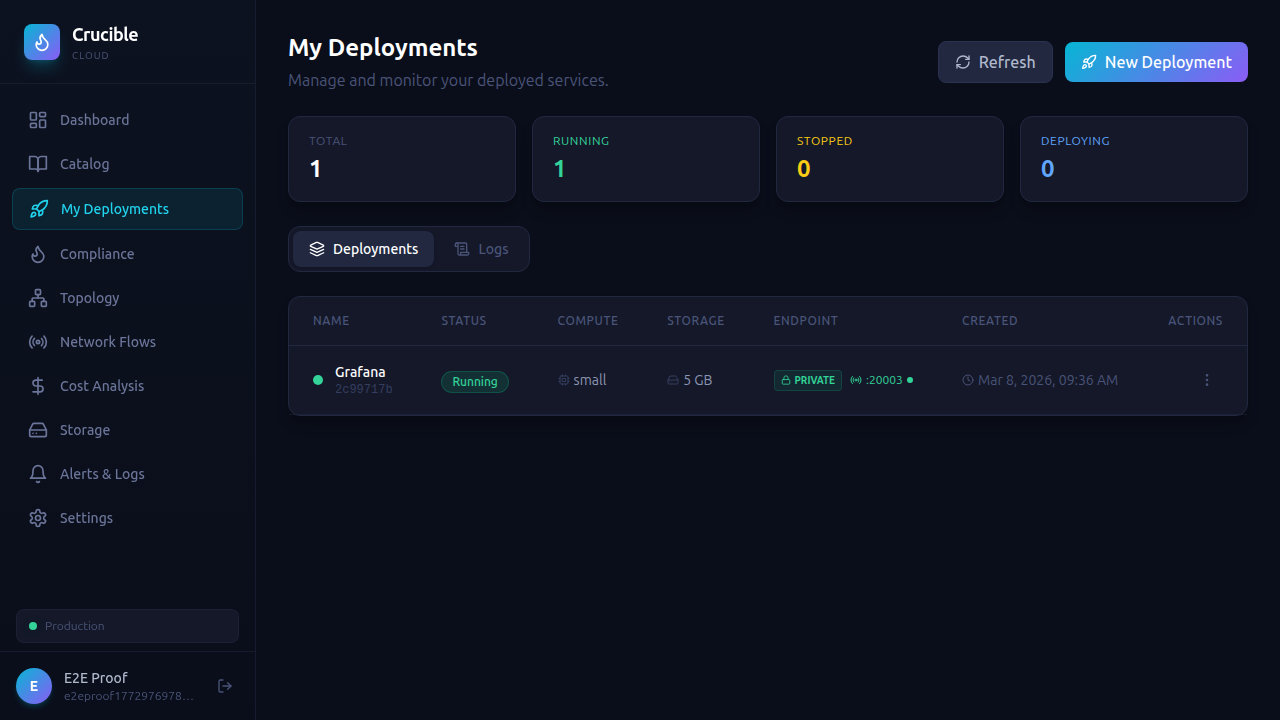

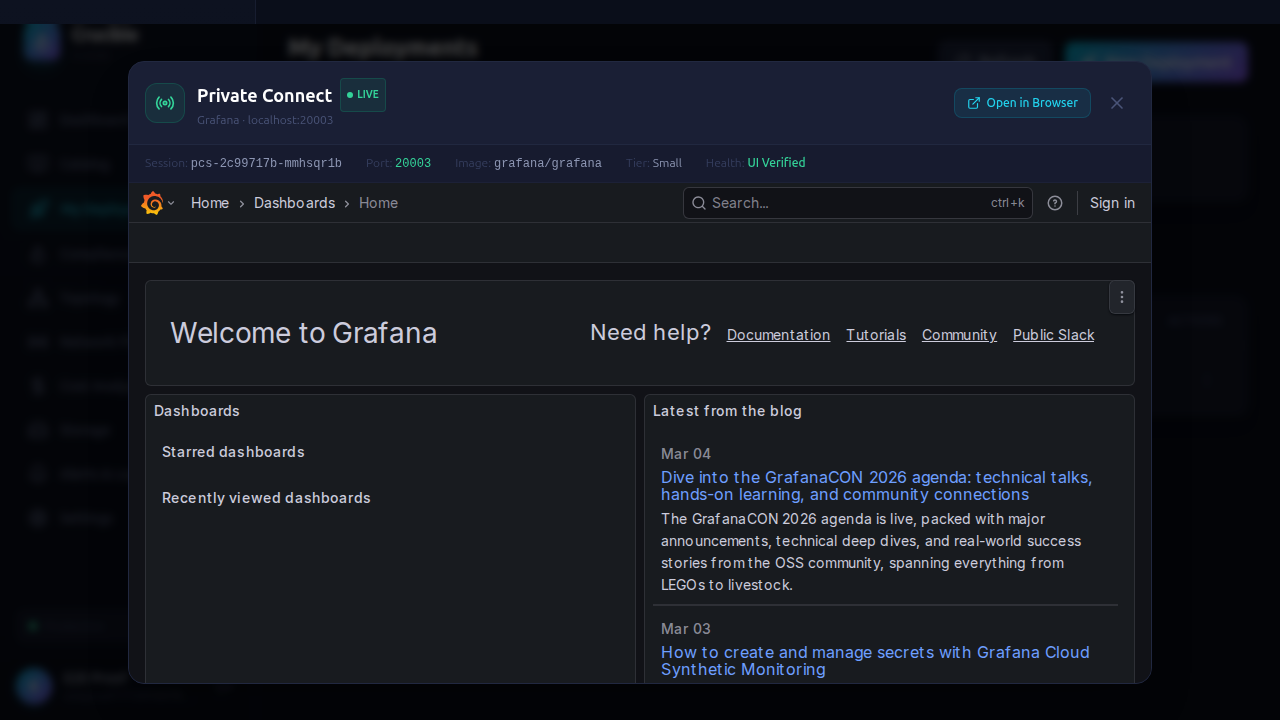

Prometheus metrics, Grafana dashboards, Uptime Kuma health checks, and custom security posture monitoring. Systems that know when they're sick and can tell you exactly what's wrong.

Systems built from scratch, shipping in production.

AI Companion

A self-evolving AI companion that runs 24/7 on a Raspberry Pi 5. EMBER doesn't just improve herself. She proves it with math, not AI opinion. Every self-generated improvement is measured using real statistics: Wilson score confidence intervals, P50/P95 latency from raw execution data, and hard degradation thresholds with automatic rollback. No LLM grades its own homework here.

Cloud Platform

A self-service cloud platform for deploying any GitHub repository into isolated, security-audited sandboxes. One-click deployments with built-in compliance scoring, cost analysis, and network monitoring. Per-stack build agents auto-detect the tech stack and generate the right build pipeline.

Most AI systems that claim "self-improvement" use the LLM to score whether it got better. That's the AI grading its own homework. We don't do that.

Most AI "self-improvement" systems ask the model: "did you get better?" The model says yes. The score goes up. Nobody checks if it actually did. These scores reflect what the model thinks happened, not what actually happened. They're unfounded, unreproducible, and indistinguishable from hallucination.

Every improvement EMBER deploys is measured with real statistics: Wilson score confidence intervals for success rates (not raw percentages), P50/P95 latency from actual execution data (not averages), and hard degradation thresholds. If quality drops by 10% or latency increases by 30%, the change rolls back automatically. No AI judgment call. Just math.
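The rollback rule can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the thresholds are treated as relative changes against the baseline, latency is compared at P95, and the nearest-rank percentile method and the function names are choices made for this example only.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile over raw samples (pct is an integer 1..100)."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def should_rollback(base_quality: float, new_quality: float,
                    base_latencies: Sequence[float],
                    new_latencies: Sequence[float],
                    max_quality_drop: float = 0.10,
                    max_latency_increase: float = 0.30) -> bool:
    """Return True when either hard degradation threshold is crossed.

    Assumption: both thresholds are relative to the baseline, and the
    latency comparison uses P95 rather than the mean.
    """
    quality_drop = (base_quality - new_quality) / base_quality
    base_p95 = percentile(base_latencies, 95)
    new_p95 = percentile(new_latencies, 95)
    latency_increase = (new_p95 - base_p95) / base_p95
    return quality_drop >= max_quality_drop or latency_increase >= max_latency_increase


if __name__ == "__main__":
    baseline_ms = [100.0] * 20
    slower_ms = [140.0] * 20
    print(should_rollback(0.90, 0.89, baseline_ms, slower_ms))
```

Comparing percentiles rather than averages keeps a handful of very slow requests from hiding behind many fast ones.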

The regression suite runs automatically on every deploy. It covers routing accuracy, domain classification, goal tracking, voice quality, and secret scrubbing. Regressions create backlog tickets automatically. Current baseline: 100% pass rate.

Gap detection scans EMBER's own output for admissions of failure such as "I'm unable to fetch", placeholder text, or responses that redirect the user to check manually. Each detected gap auto-creates a Kanban story for the self-improvement pipeline to fix.
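One way to picture this kind of output scan is a small set of patterns, each mapped to a gap category. The patterns, the category names, and the `Story` type below are hypothetical and chosen only to mirror the three kinds of gap described above.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical patterns, one per gap category.
GAP_PATTERNS = {
    "admitted_failure": re.compile(
        r"\bI(?:['’]m| am) unable to\b|\bI (?:can['’]t|cannot)\b", re.IGNORECASE
    ),
    "placeholder": re.compile(
        r"\b(?:TODO|TBD|lorem ipsum)\b|\[placeholder\]", re.IGNORECASE
    ),
    "manual_redirect": re.compile(
        r"\byou (?:can|could|should|may want to) check\b.*\b(?:manually|yourself)\b",
        re.IGNORECASE,
    ),
}


@dataclass
class Story:
    """Stand-in for a Kanban story; the real ticket schema is not shown here."""
    kind: str
    excerpt: str


def detect_gaps(output: str) -> List[Story]:
    """Return one story per gap category found in a single response."""
    stories = []
    for kind, pattern in GAP_PATTERNS.items():
        match = pattern.search(output)
        if match:
            stories.append(Story(kind=kind, excerpt=match.group(0)))
    return stories


if __name__ == "__main__":
    for story in detect_gaps("I'm unable to fetch the weather right now."):
        print(story.kind, "->", story.excerpt)
```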

90% of routing decisions use free local models (Ollama on GPU). Only when the local model's confidence is low or the routing evaluator detects a wrong domain does the system escalate to a paid API with MCP tools for live database access. Every escalation is logged with cost tracking.
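The escalation rule might look something like the sketch below. The confidence floor of 0.7, the class and field names, and the cost figure passed in by the caller are assumptions for illustration; the source only states that escalation happens on low confidence or a wrong-domain verdict and that each escalation is logged with its cost.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Union


@dataclass
class LocalResult:
    """Output of the free local model for one routing decision."""
    domain: str
    confidence: float


@dataclass
class Router:
    confidence_floor: float = 0.7  # assumed threshold, not from the source
    escalations: List[Dict[str, Union[str, float]]] = field(default_factory=list)

    def route(self, query: str, local: LocalResult,
              evaluator_ok: bool, est_cost_usd: float) -> str:
        """Stay local unless confidence is low or the evaluator flags the domain."""
        if local.confidence >= self.confidence_floor and evaluator_ok:
            return "local"
        reason = ("low_confidence" if local.confidence < self.confidence_floor
                  else "wrong_domain")
        # Every escalation is recorded together with its estimated cost.
        self.escalations.append(
            {"query": query, "reason": reason, "est_cost_usd": est_cost_usd}
        )
        return "paid_api"

    def total_cost(self) -> float:
        return sum(float(e["est_cost_usd"]) for e in self.escalations)


if __name__ == "__main__":
    router = Router()
    print(router.route("what's on my calendar?", LocalResult("calendar", 0.92), True, 0.0))
    print(router.route("summarize Q3 tickets", LocalResult("chat", 0.41), True, 0.004))
    print(router.escalations)
```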

Tools and platforms I build with daily.

Interested in working together or have questions about these systems? Reach out.